Production-ready AI solutions with MLOps

Scaling AI-based products with MLOps in the cloud

Have you shown with a proof of concept that your use case works with your machine learning algorithm, and do you now plan to develop it into a production-ready, marketable product? The supposedly final step ("putting the PoC into production") often turns out to be a huge leap. Automated operation requires a comprehensive infrastructure that spans the whole model lifecycle, from data preparation to deployment. While you focus on further developing your algorithm, we take care of integrating it into production operation.

What's especially important for implementing a marketable solution:

Classic software products and AI algorithms are usually developed further in increments.

For AI applications, however, a team of data science experts tests a multitude of variants in parallel, with different input data, parameters, features and models. Each of these variants has to be developed, monitored and archived together with its history.

DevOps meets ML know-how: compared with classical software projects, complexity increases because there are three artefacts (code, model and data) and they all depend on each other.

We can provide this specialised knowledge.

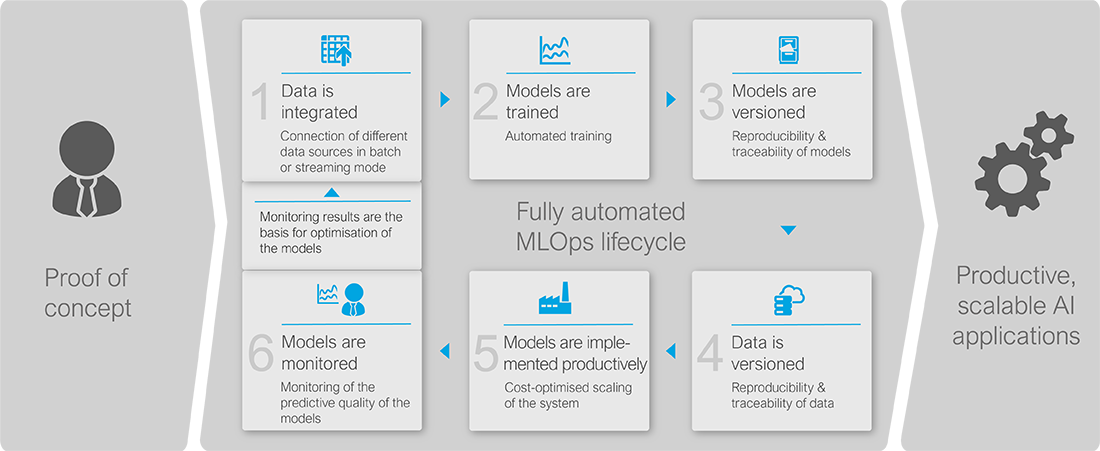

From PoC to a production-ready AI solution with MLOps

With our professional, structured approach, we turn your prototype AI use case or PoC into a production-ready, usable software solution.

This is how we handle the MLOps lifecycle: depending on your individual requirements, we are happy to assist you with individual steps or with the entire process.

Fully automated ML model lifecycle with a fully automated MLOps pipeline

Services you can order - what we offer specifically

Setup of a scalable, production-ready, marketable ML application or product:

- Use of Azure Machine Learning, Amazon SageMaker and other services suited to your use case, plus virtually unlimited cloud storage, e.g. with Amazon S3 or Azure Blob Storage

A collaborative "data science" development environment:

- for better scaling

Automated deployment pipeline that puts ML models into production:

- e.g. with Kubeflow

Integrated operations monitoring of the ML pipeline:

- from data preparation to model operation, e.g. with Amazon CloudWatch or Azure Monitor and Application Insights

Monitoring of the prediction quality and automated retraining to improve the prediction quality:

- Automated evaluation of prediction quality (e.g. F1 score, AUC) and detection of changing input data
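
As a rough illustration of what such an automated check could look like, the following minimal sketch computes the F1 score and AUC for a binary classifier and uses a simple mean-shift measure as a stand-in for input drift detection. The function names, the thresholds `min_f1` and `max_shift`, and the retraining policy are assumptions made for this example; they do not describe a specific product or cloud service API.

```python
from statistics import mean, pstdev


def f1_score(y_true, y_pred):
    """F1 score for binary labels, where 1 marks the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def auc(y_true, scores):
    """AUC as the share of positive/negative pairs ranked correctly (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mean_shift(reference, current):
    """Drift signal: shift of the current mean, in units of the reference std deviation."""
    spread = pstdev(reference)
    if spread == 0:
        return 0.0 if mean(current) == mean(reference) else float("inf")
    return abs(mean(current) - mean(reference)) / spread


def needs_retraining(y_true, y_pred, reference, current, min_f1=0.8, max_shift=2.0):
    """Hypothetical policy: retrain when quality drops or one input feature drifts."""
    return f1_score(y_true, y_pred) < min_f1 or mean_shift(reference, current) > max_shift
```

In a real pipeline, a check like this would typically run on a schedule against freshly labelled data, and a positive result would trigger the retraining step rather than just return a flag.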

ML model versioning and history tracking

- to make the results of machine learning models, and the data they were built on, reproducible
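
One way to picture this is a version ID derived from all three artefacts: code, parameters and data. The sketch below is a simplified illustration under that assumption. `fingerprint` and `ModelRegistry` are hypothetical names invented for this example, and a production setup would use a dedicated model registry rather than an in-memory dictionary.

```python
import hashlib
import json


def fingerprint(code: bytes, params: dict, data: bytes) -> str:
    """Return a deterministic version ID for one combination of code, params and data."""
    digest = hashlib.sha256()
    serialized_params = json.dumps(params, sort_keys=True).encode("utf-8")
    for part in (code, serialized_params, data):
        # Hash each part on its own first so the boundaries between parts stay distinct.
        digest.update(hashlib.sha256(part).digest())
    return digest.hexdigest()[:16]


class ModelRegistry:
    """In-memory stand-in for a model registry (hypothetical, for illustration only)."""

    def __init__(self):
        self._entries = {}

    def register(self, code, params, data, metrics):
        """Store the metadata of a training run under its fingerprint and return the ID."""
        version = fingerprint(code, params, data)
        self._entries.setdefault(version, {"params": params, "metrics": metrics})
        return version

    def lookup(self, version):
        """Return the stored metadata for a previously registered version."""
        return self._entries[version]
```

Because the ID depends only on the content of the three artefacts, retraining with identical code, parameters and data yields the same version, while any change to one of them yields a new one.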

On-demand support to optimise the implemented ML models

- Review of the implemented ML models, evaluation of alternative approaches and identification of potential optimisations

Your advantages with doubleSlash's MLOps solution

You benefit from an experienced IT partner who combines comprehensive AI knowledge with know-how in professional software development. This way you shorten your time to market and relieve your project of a lot of complexity.

We turn your prototype AI use case or PoC into a production-ready software solution by combining our long-standing experience in implementing and operating reliable IT systems with comprehensive know-how in machine learning and artificial intelligence. This mix of competences in technologies, methods and processes helps you develop a robust, reliable and scalable AI application cost-effectively and in a short time.

Scalability and short time to market

- With a scalable cloud solution, you collaborate efficiently and transparently in distributed teams and on various model variants, even as demand increases.

- You can continuously advance your innovations while keeping your AI applications stable, and swiftly arrive at a production-ready solution.

Reproducibility and auditability

- Results, recommendations and predictions of your AI models can be traced back at any given time – since model versioning makes them exactly reproducible in retrospect.

Automation and scalability

- Dynamic load adaptation through automated deployment and scaling.

- Increased efficiency through a high degree of automation.

Reusability

- Standardised processes make it possible to provide machine learning models and additional software components efficiently in different environments.

- The application of good practices, preconfigured software components and standardised services significantly reduces the development effort.

Keeping an eye on AI applications with ease

- Robust machine learning software that works reliably.

- Changes to software and ML models are precisely controlled and traceable.

- Continuous monitoring of model performance to identify model deficiencies at an early stage.

How can we support you with a powerful machine learning platform?